- Concept Discovery: An LLM proposes class-discriminative attributes, such as "long beak" for a bird, to identify key semantic features.

- Spatial Grounding: GroundedSAM spatially localizes these concepts within images to generate dynamic, adaptive guidance masks.

- Semantic Alignment: The model's LRP (fulfills conservation) relevance maps are optimized to align with these concept regions while simultaneously suppressing focus on spurious background cues.

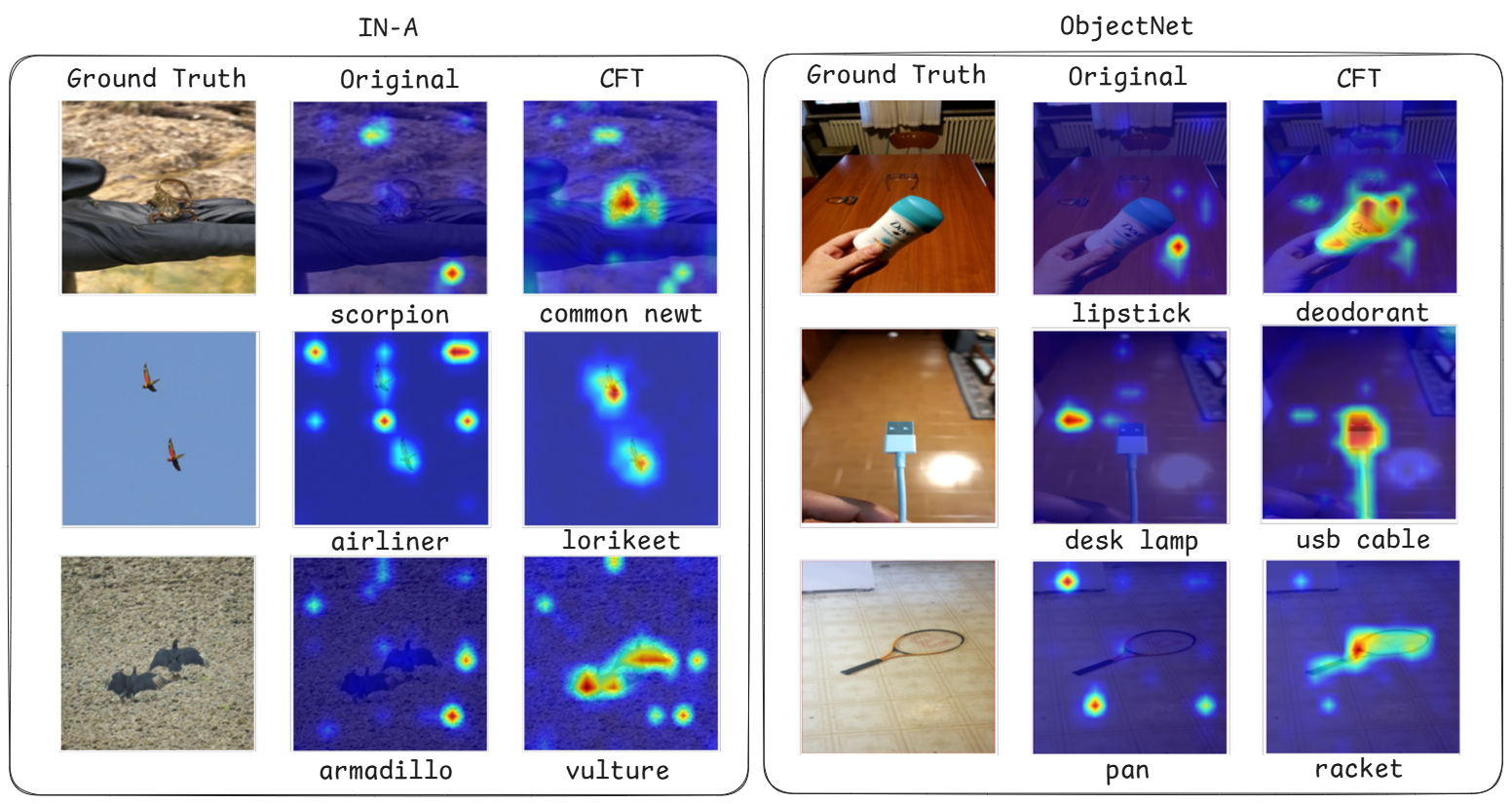

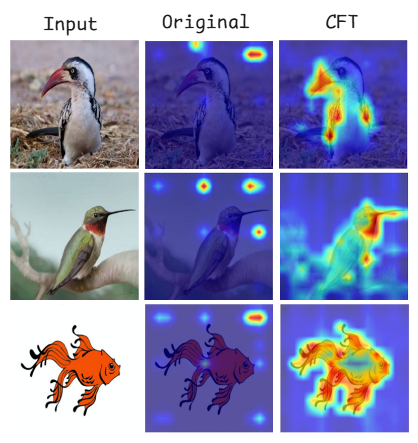

The Problem: Standard ViTs often fixate on backgrounds (middle). The Solution: CFT steers the model to focus on fine-grained semantic concepts (e.g., beaks, wings, fins), leading to massive gains in out-of-distribution robustness.